Advanced AI technology:

Delivers accurate scores earlier at unbeatable scale

150B+

Transactions a year

99.9999%

Uptime

100-120 ms

Average response time in the cloud

What makes Brighterion different?

Proven

Brighterion’s decades of experience in developing AI technology to fight financial crime includes powering Mastercard’s proven fraud scoring system, Decision Intelligence. Our patented Smart Agents technology enables exceptional personalization, accuracy, adaptability and greater insights across data.

Personalization: Highly personalized functionality provides updated intelligence on any entity, such as a card, account or merchant

Accuracy: Dramatic decrease in false-positive rates and higher detection rates

Segmentation: Identification of customer segments exhibiting low fraud occurrences

360-degree insights: Enablement of cross-channel anomaly detection, prediction and analysis

Intelligence

Brighterion has leading expertise in extracting intelligence from high-frequency data. Building on Mastercard’s unique position in the payments world, we have the advantage of using proprietary network intelligence to enhance precision and detection above and beyond our clients’ own datasets.

Fraud and loss data: Gain valuable insights regarding consumer fraud and under-reported chargeback claims.

Network intelligence: Brighterion integrates network intelligence to protect the payments ecosystem. Using these insights, we can extract intelligence far superior to that of other models.

Modeling

Our full-stack, state-of-the-art modeling allows us to create off-the-shelf machine learning solutions that are production-ready today. Brighterion also develops custom models in 6-8 weeks using our proprietary suite of data science, machine learning and ensemble technologies.

- Business and data understanding

- Data preparation and modeling

- Evaluation and optimization

The proprietary machine learning suite includes feature engineering, model generation and ensemble tools to build an optimal model across segments.

Deployment and scale

The unrivaled performance and scalability of Brighterion’s advanced AI solutions allow for easy deployment in the cloud or on-premises. Our industry-leading, highly scalable models are proven while in production and achieve lightning-fast response times with virtually no downtime.

High frequency: Billions of transactions scored per month

Resiliency: Resilient architecture allows for 99.9999% uptime

Cost savings: Models can be deployed in the cloud or on-premises

Lightning fast: Millisecond response times
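To put the uptime and volume figures in perspective, the following is a minimal back-of-the-envelope sketch. It only converts the percentages and totals quoted in this document into per-year and per-second terms. The function names are illustrative, and the non-leap-year constant is an assumption.

```python
# Back-of-the-envelope arithmetic for figures quoted in this document.
# Assumption: a non-leap year of 365 days.
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # 31,536,000


def downtime_seconds_per_year(uptime_percent: float) -> float:
    """Maximum yearly downtime implied by an uptime percentage."""
    return SECONDS_PER_YEAR * (1 - uptime_percent / 100)


def average_tps(transactions_per_year: float) -> float:
    """Average transactions per second implied by a yearly volume."""
    return transactions_per_year / SECONDS_PER_YEAR


# 99.9999% uptime leaves roughly half a minute of downtime per year.
print(round(downtime_seconds_per_year(99.9999), 1))  # 31.5

# 150 billion transactions a year averages out to several thousand per second.
print(round(average_tps(150e9)))  # 4756
```

These are averages only. Peak traffic will be higher than the per-second average, and the sketch says nothing about how downtime is distributed over the year.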

Data responsibility

Brighterion adheres to our industry-leading data responsibility practice with embedded model governance and product design principles. We are committed to responsible, human-centered data innovation and product design, guided by our AI Governance framework for all customer engagements.

Security and privacy: Best-in-class security and privacy practices

Transparency and control: Clear and simple explanation of decisions through model explainability layers

Accountability: Individuals and their rights are always at the center of data practices

Innovation: Constant data innovation that benefits individuals through better experiences, products and services

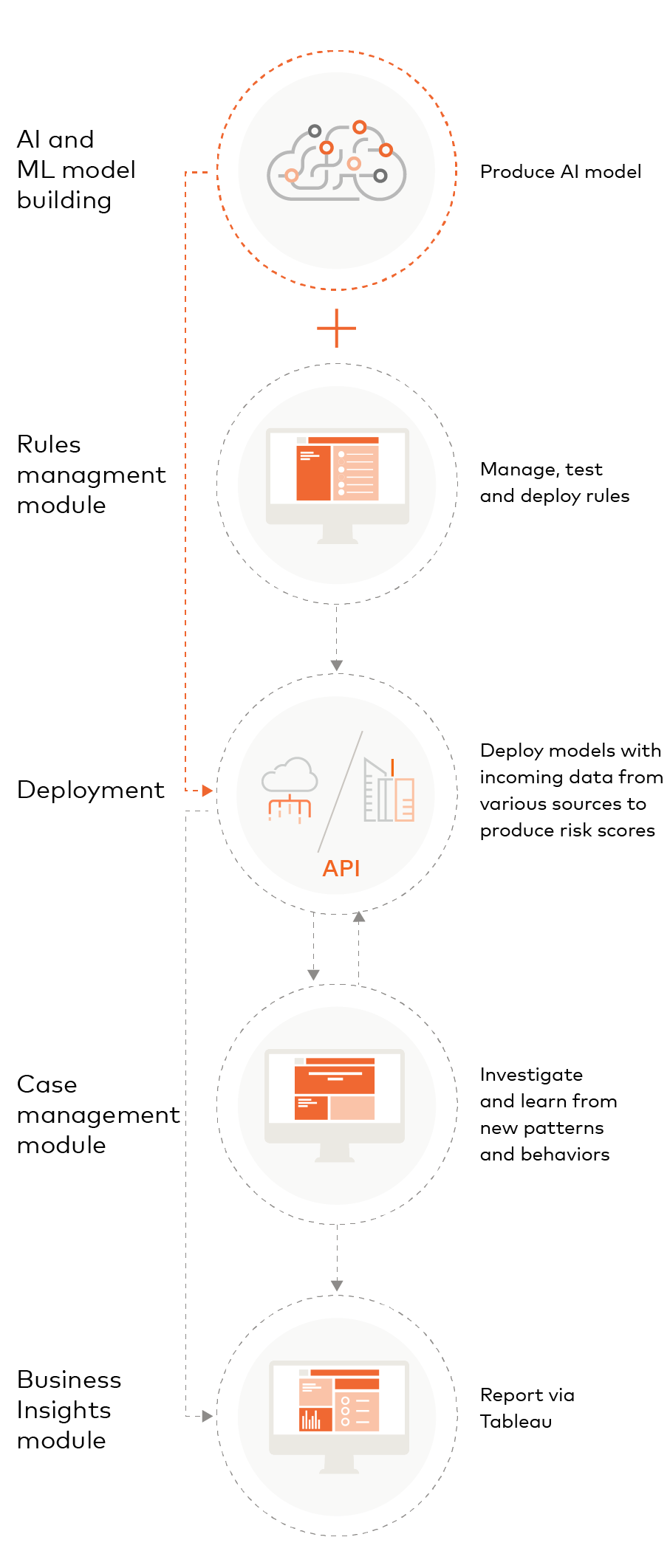

Brighterion’s advanced AI technology

Brighterion offers a scalable, end-to-end AI solution with exceptionally accurate scoring. These scores can be complemented with powerful, user-friendly modules, including Rules Management, Case Management and Business Insights.

Customizable modules

Rules management

Write, test and manage business rules using an intuitive user interface.

Create and edit rules

- Determine if transactions are suspicious, fraudulent or genuine

- Build on any input field or derived element in the system

Profile transactions

- Define patterns to detect suspicious behavior in real time

- Use several types of profiling: real-time, long-term, geolocation and more

- Define any time window for aggregation
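As an illustration of profiling over a configurable time window, here is a minimal sketch of a sliding-window aggregate per entity. It is not Brighterion's implementation. The class `WindowedProfile`, the entity ID `card-123` and the 10-minute window are hypothetical, and the sketch assumes transactions arrive in timestamp order.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta
from typing import Tuple


class WindowedProfile:
    """Hypothetical per-entity profile: transaction count and total amount
    over a sliding time window."""

    def __init__(self, window: timedelta):
        self.window = window
        # entity_id -> deque of (timestamp, amount), oldest first
        self._events = defaultdict(deque)

    def update(self, entity_id: str, timestamp: datetime, amount: float) -> Tuple[int, float]:
        """Record a transaction and return (count, total) for the window
        ending at this transaction's timestamp."""
        events = self._events[entity_id]
        events.append((timestamp, amount))
        cutoff = timestamp - self.window
        # Drop events that fell out of the window (assumes ordered arrival).
        while events and events[0][0] <= cutoff:
            events.popleft()
        return len(events), sum(a for _, a in events)


profile = WindowedProfile(window=timedelta(minutes=10))
t0 = datetime(2024, 1, 1, 12, 0)
profile.update("card-123", t0, 20.0)
profile.update("card-123", t0 + timedelta(minutes=4), 35.0)
count, total = profile.update("card-123", t0 + timedelta(minutes=12), 50.0)
print(count, total)  # 2 85.0 (the first transaction is outside the window)
```

A rule could then compare `count` or `total` against a threshold. Changing the `window` argument is what "any time window" would correspond to in this sketch.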

Rule testing

- Test rules on historical data to simulate performance prior to deploying

- Analyze rule performance while in production and before deployment

- Assess the number of alerts generated by each rule, including true positives and false positives
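Testing rules against historical data can be sketched as a simple backtest that counts alerts, true positives and false positives per rule. This is a sketch under stated assumptions, not the product's rule engine. The rule names, the transaction fields (`amount`, `country`, `is_fraud`) and the sample history are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class RuleStats:
    alerts: int = 0
    true_positives: int = 0
    false_positives: int = 0


def backtest(rules: Dict[str, Callable[[dict], bool]],
             history: List[dict]) -> Dict[str, RuleStats]:
    """Replay labelled historical transactions through each rule and
    count how many alerts were correct (fraud) or incorrect (genuine)."""
    stats = {name: RuleStats() for name in rules}
    for txn in history:
        for name, rule in rules.items():
            if rule(txn):
                s = stats[name]
                s.alerts += 1
                if txn["is_fraud"]:
                    s.true_positives += 1
                else:
                    s.false_positives += 1
    return stats


# Hypothetical rules built on input fields of a transaction.
rules = {
    "high_amount": lambda t: t["amount"] > 1000,
    "foreign": lambda t: t["country"] != "US",
}

# Hypothetical labelled history.
history = [
    {"amount": 1500, "country": "US", "is_fraud": True},
    {"amount": 1200, "country": "US", "is_fraud": False},
    {"amount": 50, "country": "FR", "is_fraud": False},
    {"amount": 2000, "country": "FR", "is_fraud": True},
]

results = backtest(rules, history)
for name, s in results.items():
    print(name, s.alerts, s.true_positives, s.false_positives)
# high_amount 3 2 1
# foreign 2 1 1
```

Running the same counts on production alerts, once investigators have labelled them, would give the in-production view of rule performance.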

Authentication

- Authenticate via your existing MC Connect account or via SSO with your identity service provider

Case management

Investigate and evaluate flagged transactions in an intuitive user interface.

Case management and administration

- Create cases for alerts or dismiss alerts

- Investigators can work on cases from start to finish

- Assign users, priority, status and tasks to cases

- Enter resolution results to close cases, such as not fraudulent, stolen or counterfeit

Reporting

- Generate management information reports

Audit and traceability

- Review all activity in the module

Users, groups and roles

- Administer and create permission profiles, assign users to groups, create and manage users

- Authenticate via your existing MC Connect account or via SSO with your identity service provider

Business insights

Business intelligence and customizable reports on the go.

Self-serve analytics

- Create customizable reports across any payment/account portfolio

- Define key performance indicators

Standardized reporting

- Use pre-packaged report templates

- Create custom or premade report templates for team use

Actionable intelligence

- Improve decision-making in real time

- Fine-tune rule performance

Aggregated metrics

- Define level of detail based on role and need

- Create views across financial institutions (issuers, processors and gateways)